Published: 2022.04.13 14:06

Engineering researchers have developed a hybrid machine-learning approach to muscle gesture recognition in prosthetic hands that combines an AI technique normally used for image recognition with another approach specialized for handwriting and speech recognition. The technique achieves far better performance than traditional machine-learning methods.

A paper describing the hybrid approach was published in the journal Cyborg and Bionic Systems on November 8th, 2021.

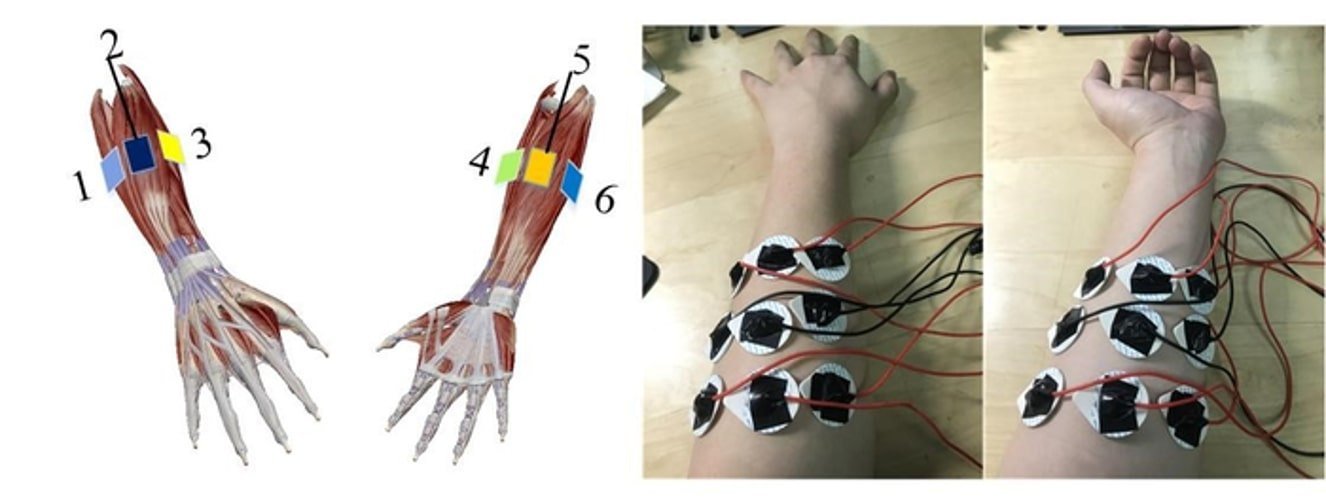

Motor neurons are the parts of the central nervous system that directly control our muscles. They transmit electrical signals that cause muscles to contract. Electromyography (EMG) is a method of measuring muscle response by recording this electrical activity through electrode needles inserted through the skin and into the muscle. Surface EMG (sEMG) performs the same recording non-invasively, with the electrodes placed on the skin above the muscle, and is used in non-medical settings such as sports and physiotherapy research.

Over the last decade, researchers have begun investigating the use of surface EMG signals to control prostheses for amputees, especially prosthetic hands, whose complex movements and gestures demand smoother, more responsive, and more intuitive control than is currently possible.

Unfortunately, unexpected environmental interference, such as a shift of the electrodes, introduces a great deal of ‘noise’ for any device attempting to recognize surface EMG signals. Such shifts occur regularly in daily wear and use. To overcome this problem, users must go through a lengthy and tiring sEMG training period before using their prostheses, laboriously collecting and classifying their own surface EMG signals in order to be able to control the prosthetic hand.

To reduce or eliminate the burden of such training, researchers have explored various machine learning approaches, in particular deep learning pattern recognition, to distinguish between different, complex hand gestures and movements despite environmental signal interference.

The training burden can in turn be reduced by optimizing the structure of the deep learning network. One improvement that has been trialed is the convolutional neural network (CNN), whose connection structure is analogous to that of the human visual cortex. This type of neural network offers improved performance on images and speech, and as such is at the heart of computer vision.

Researchers have so far achieved some success with CNNs, significantly improving the recognition (‘extraction’) of the spatial dimensions of sEMG signals related to hand gestures. But while CNNs deal well with space, they struggle with time. Gestures are not static phenomena but take place over time, and a CNN ignores the time information in the continuous contraction of muscles.

Recently, some researchers have begun to apply a long short-term memory (LSTM) artificial neural network architecture to the problem. An LSTM has a structure with ‘feedback’ connections, giving it superior performance in processing, classifying, and making predictions based on sequences of data over time, especially where there are lulls, gaps, or interference of unexpected duration between the important events. LSTM is a form of deep learning that has been applied most successfully to tasks involving unsegmented, connected activity such as handwriting and speech recognition.

The challenge here is that while researchers have achieved better gesture classification of sEMG signals, the size of the computational model required is a serious problem. The microprocessor that can be used is limited in capability, and a more powerful one would be too costly. Finally, while such deep learning models work on computers in the lab, they are difficult to run on the sort of embedded hardware found in a prosthetic device.

“Convolutional neural networks were after all conceived with image recognition in mind, not control of prostheses,” said Dianchun Bai, one of the authors of the paper and professor of electrical engineering at Shenyang University of Technology. “We needed to couple CNN with a technique that could deal with the dimension of time, while also ensuring feasibility in the physical device that the user must wear.”

So the researchers developed a hybrid CNN and LSTM model that combines the spatial and temporal advantages of the two approaches. This reduced the size of the deep learning model while achieving high accuracy and more robust resistance to interference.
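To make the idea of chaining the two network types concrete, here is a minimal, untrained sketch in plain Python. It is not the authors' model: the article does not give their architecture, so the function names (`conv1d_relu`, `lstm_step`, `classify`), the eight electrode channels, the window length, the layer sizes, and the random weights are all illustrative assumptions. Only the count of 16 gesture classes comes from the study. The convolution summarizes each short window of sEMG samples across the electrodes, and the LSTM carries information from one window to the next.

```python
import math
import random

def conv1d_relu(window, kernels):
    """Convolve one sEMG window along time; return one feature per kernel.

    window:  list of T samples, each a list of C channel values
    kernels: list of F kernels, each a list of K taps of C weights
    Each feature is the max over time of the ReLU response (global max pooling).
    """
    features = []
    for kernel in kernels:
        k = len(kernel)
        best = 0.0  # ReLU floor: negative responses are clipped to zero
        for start in range(len(window) - k + 1):
            response = sum(
                w * x
                for tap, sample in zip(kernel, window[start:start + k])
                for w, x in zip(tap, sample)
            )
            best = max(best, response)
        features.append(best)
    return features

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h, c, weights):
    """One LSTM time step; weights maps gate name -> (matrix over [x; h], bias)."""
    xh = x + h  # concatenate the input features and the previous hidden state

    def gate(name, activation):
        matrix, bias = weights[name]
        return [activation(sum(w * v for w, v in zip(row, xh)) + b)
                for row, b in zip(matrix, bias)]

    i = gate("input", sigmoid)
    f = gate("forget", sigmoid)
    o = gate("output", sigmoid)
    g = gate("candidate", math.tanh)
    c_new = [fj * cj + ij * gj for fj, cj, ij, gj in zip(f, c, i, g)]
    h_new = [oj * math.tanh(cj) for oj, cj in zip(o, c_new)]
    return h_new, c_new

def classify(windows, kernels, weights, readout, hidden_size):
    """CNN features per window -> LSTM across windows -> softmax over gestures."""
    h = [0.0] * hidden_size
    c = [0.0] * hidden_size
    for window in windows:
        h, c = lstm_step(conv1d_relu(window, kernels), h, c, weights)
    scores = [sum(w * v for w, v in zip(row, h)) for row in readout]
    peak = max(scores)  # subtract the maximum for a numerically stable softmax
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def random_matrix(rng, rows, cols, scale=0.1):
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]

# Hypothetical sizes; only GESTURES = 16 is taken from the article.
CHANNELS, KERNEL_TAPS, FILTERS, HIDDEN, GESTURES = 8, 5, 4, 6, 16
rng = random.Random(0)
kernels = [random_matrix(rng, KERNEL_TAPS, CHANNELS) for _ in range(FILTERS)]
weights = {name: (random_matrix(rng, HIDDEN, FILTERS + HIDDEN), [0.0] * HIDDEN)
           for name in ("input", "forget", "output", "candidate")}
readout = random_matrix(rng, GESTURES, HIDDEN)

# Stand-in recording: 10 consecutive windows of 20 samples from 8 electrodes.
recording = [random_matrix(rng, 20, CHANNELS, scale=1.0) for _ in range(10)]
probabilities = classify(recording, kernels, weights, readout, HIDDEN)
predicted_gesture = max(range(GESTURES), key=probabilities.__getitem__)
```

Because the weights are random and untrained, `predicted_gesture` is meaningless here; the sketch shows only how data flows through the two stages. A practical system would presumably be trained with a deep learning framework and then reduced in size to suit embedded hardware.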

After developing their system, they tested the hybrid approach on ten non-amputee subjects performing a series of 16 different gestures such as gripping a phone, holding a pen, pointing, pinching, and grasping a cup of water. The results demonstrated far better performance than CNN alone or other traditional machine learning methods, achieving a recognition accuracy of over 80 percent.

The hybrid approach did, however, struggle to accurately recognize two pinching gestures: a pinch using the middle finger and one using the index finger. In future work, the researchers want to optimize the algorithm and improve its accuracy further, while keeping the model small enough to run on prosthesis hardware. They also want to determine what causes the difficulty in recognizing pinching gestures and to expand their experiments to a much larger number of subjects.

Ultimately, the researchers want to develop a prosthetic hand that is as flexible and reliable as a user’s original limb.
